Thesis Opportunities

A thesis is a genuine research experience — not a formality. Whether the goal is to work on open academic problems, collaborate with industry, or gain an international experience, there is a path here. Topics span AI, High-Performance Computing, and Large-Scale Deep Learning, and every thesis is carried out with close, continuous mentorship from the start.

We are a member of ELLIS, ELSA, and ELLIOT, and part of the MSCA ELLE network, which connects students with leading research groups across Europe.

Frequent one-on-one meetings, structured feedback, and clear milestones — students are never left to figure things out alone.

Strong thesis work regularly leads to co-authored publications in top-tier venues — a concrete advantage for any career path.

Thesis work can continue into a PhD, a research contract, or an industry position — depending on each student's goals.

Types of thesis

Three main tracks are available, each with its own focus and opportunities.

An academic track focused on open research problems in AI, computer vision, vision-and-language, or HPC. Research theses are often connected to funded EU projects and may involve collaboration with other European groups. The best work leads to a scientific publication, and for those interested, can naturally continue into a PhD programme or a research contract.

Applied research in collaboration with industry partners, tackling real-world problems with direct impact. These theses combine rigorous methodology with practical relevance. The collaboration is structured to maintain scientific quality while addressing problems of genuine industrial interest, and often opens doors to employment opportunities at the partnering company.

An international experience at a partner university or company, with full co-supervision. These theses are well-suited for students looking to work in a different research environment, build an international network, and broaden their perspective — while maintaining continuity and support throughout the entire period.

Students who have independently identified a host company are also welcome to reach out — co-supervision can be arranged on a case-by-case basis.

Available proposals

Enhancing Vision Transformer Encoders for Document Understanding in Vision-Language Models

Modern Vision-Language Models can answer questions about documents — parsing charts, tables, figures, and text all at once — but they largely fail to explain where in the document their answer comes from. Heatmaps produced by current open-source models tend to highlight text regions, missing the richer visual evidence encoded in graphs and figures. Closed-source models offer no grounding at all. This thesis investigates how to make document VLMs both more accurate and more interpretable, enabling them to pinpoint the visual evidence behind each answer.

The approach combines improved vision encoder training — through contrastive learning on question-answer triplets and caption-based supervision inspired by CLIP — with attention-based visual attribution at inference time. The result should be a model that not only answers correctly but produces semantically meaningful heatmaps over complex document elements such as charts and figures. The work is aligned with our ongoing research on multimodal models and is expected to lead to publications at top venues.

🟩 Can lead to research publications

🕐 Only one position remaining

Posted: 04/05/2026

Spatial Control of Subject Placement via Massive Activation Steering in Diffusion Transformers

State-of-the-art image generators like FLUX and Stable Diffusion 3 are powerful but offer limited control over where subjects appear in the generated image. This thesis exploits a surprising structural property of Diffusion Transformers: a small subset of high-magnitude activation channels encode spatial layout information from the very first denoising steps, acting as anchors that determine where visual content is ultimately placed in the image.

By directly manipulating these activation maps during inference — with no training, no fine-tuning, and no architectural changes — the thesis will develop a lightweight method for steering subject placement to user-specified regions. The goal is a practical and interpretable spatial controller compatible with any MMDiT-based model such as FLUX or Stable Diffusion 3. The work contributes to the growing field of training-free generation control and is part of our ELLIOT EU project on personalization of foundation models.

🟩 Can lead to research publications

🕐 Only one position remaining

Posted: 04/05/2026

Gradient-Steered Diffusion via Latent Verification

Controlling what a diffusion model generates is still an open problem. Generating many candidates and picking the best is expensive; backpropagating through a heavy VLM to steer generation is slow. This thesis proposes a leaner alternative: a lightweight verifier operating directly in the DiT's internal representation space, whose gradient signal can be injected into the noisy latent at inference time — nudging generation toward a target property without decoding to pixels and without a large external model in the loop.

The student will build and evaluate this mechanism on top of modern DiT models such as FLUX Schnell, studying how gradient strength, layer choice, and timestep placement affect the trade-off between control precision and image quality. The work is directly connected to our ongoing research on inference-time scaling with latent verifiers and is expected to lead to scientific publications.
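As a rough illustration of the mechanism described above, the sketch below interleaves a denoising step with a gradient nudge from a verifier that scores the latent directly. Everything in it is a toy assumption made for illustration: the quadratic `verifier_score`, the `identity_denoiser` placeholder, and the names `guided_sampling` and `strength` are hypothetical and do not come from the actual project code or from any DiT library.

```python
from typing import Callable, List

Latent = List[float]


def verifier_score(latent: Latent, target: Latent) -> float:
    """Toy verifier (assumption): higher when the latent is closer to `target`."""
    return -0.5 * sum((z - t) ** 2 for z, t in zip(latent, target))


def verifier_grad(latent: Latent, target: Latent) -> Latent:
    """Analytic gradient of the toy verifier score with respect to the latent."""
    return [t - z for z, t in zip(latent, target)]


def guided_sampling(
    latent: Latent,
    denoise_step: Callable[[Latent], Latent],
    target: Latent,
    strength: float,
    num_steps: int,
) -> Latent:
    """Alternate one denoising step with one gradient-ascent step on the verifier."""
    for _ in range(num_steps):
        latent = denoise_step(latent)
        grad = verifier_grad(latent, target)
        # Inject the verifier gradient into the latent; no decoding to pixels.
        latent = [z + strength * g for z, g in zip(latent, grad)]
    return latent


def identity_denoiser(latent: Latent) -> Latent:
    """Placeholder for a real DiT denoising step (assumption)."""
    return list(latent)


start = [0.0, 0.0]
target = [1.0, -1.0]
final = guided_sampling(start, identity_denoiser, target, strength=0.5, num_steps=3)
print(final, verifier_score(final, target))
```

In a real setting the verifier would be a small learned network and the gradient would come from automatic differentiation; the `strength` argument stands in for the gradient-strength knob whose effect on control precision and image quality the thesis sets out to study.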

🟩 Can lead to research publications

🕐 Only one position remaining

Posted: 04/05/2026

Understanding and Controlling What Diffusion Models Generate

What does a Diffusion Transformer “think” as it generates an image? This thesis investigates the internal activation space of models like FLUX to understand how safe and unsafe content is encoded in their hidden representations — and how targeted, training-free interventions can steer generation away from harmful outputs without sacrificing image quality.

The approach identifies a safety direction in the model's activation space from paired safe/unsafe generation examples, and uses it as a steering signal at specific transformer layers during inference. Preliminary experiments show that small targeted adjustments can effectively suppress unwanted content, while excessive intervention degrades image quality — making steering calibration an interesting open research question. Beyond safety, the thesis will explore how the same framework applies to broader content control and mechanistic interpretability of generative models.
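The sketch below shows one simple way such a steering signal could be computed and applied. It assumes the safety direction is estimated as the normalised difference between the mean activations of safe and unsafe examples, which is a common choice in activation-steering work but is not confirmed by the description above. The function names and the two-dimensional toy activations are hypothetical.

```python
from typing import List

Vector = List[float]


def mean_vector(rows: List[Vector]) -> Vector:
    """Component-wise mean of a non-empty list of equal-length vectors."""
    n = len(rows)
    return [sum(column) / n for column in zip(*rows)]


def safety_direction(safe_acts: List[Vector], unsafe_acts: List[Vector]) -> Vector:
    """Unit vector pointing from the unsafe mean towards the safe mean (assumption)."""
    mu_safe = mean_vector(safe_acts)
    mu_unsafe = mean_vector(unsafe_acts)
    diff = [s - u for s, u in zip(mu_safe, mu_unsafe)]
    norm = sum(d * d for d in diff) ** 0.5
    if norm == 0.0:
        raise ValueError("safe and unsafe means coincide; no direction to steer along")
    return [d / norm for d in diff]


def steer(hidden: Vector, direction: Vector, strength: float) -> Vector:
    """Shift one hidden state along the safety direction by `strength`."""
    return [h + strength * d for h, d in zip(hidden, direction)]


# Toy activations collected at one layer for paired safe/unsafe generations.
safe = [[1.0, 0.0], [3.0, 0.0]]
unsafe = [[-1.0, 0.0], [-3.0, 0.0]]

direction = safety_direction(safe, unsafe)
steered = steer([0.0, 1.0], direction, strength=0.5)
print(direction, steered)
```

The `strength` parameter is where the calibration question shows up: a value that is too small leaves the content unchanged, while a value that is too large pushes hidden states far from what the model saw during training.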

🟩 Can lead to research publications

🕐 Only one position remaining

Posted: 04/05/2026

Finding the Best Embedding Inside a Multimodal LLM — Without Training

All current multimodal embedding models pool their representations from the final layer of a large LLM — but is the last layer really the best for image-text retrieval? This thesis investigates whether specific (layer, head) combinations inside a pretrained MLLM already outperform final-layer pooling for cross-modal retrieval — with no additional training. If an intermediate head outscores the final layer, the result is simultaneously a better retrieval model and a faster one: all subsequent computation can be discarded, potentially cutting inference cost by up to two-thirds.

The student will analyse representations across all layers and heads of models such as Qwen2.5-VL using Mutual K-Nearest Neighbor scores — interpreted as the Hit@K metric for retrieval — and evaluate on MMEB, a comprehensive multimodal benchmark covering 36 tasks including retrieval, classification, and visual grounding. The work is part of our ongoing research on multimodal visual analysis and is expected to lead to scientific publications.
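To make the evaluation idea concrete, here is a minimal sketch of Hit@K for paired image-text embeddings, used to compare candidate pooling sources such as the final layer and an intermediate head. It implements plain Hit@K with cosine similarity rather than the full Mutual K-Nearest Neighbor score, and the candidate names and toy embeddings are invented for illustration only.

```python
import math
from typing import Dict, List

Vector = List[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def hit_at_k(image_embs: List[Vector], text_embs: List[Vector], k: int) -> float:
    """Fraction of images whose paired text (same index) is among the k nearest texts."""
    hits = 0
    for i, image in enumerate(image_embs):
        sims = [cosine(image, text) for text in text_embs]
        ranked = sorted(range(len(sims)), key=lambda j: sims[j], reverse=True)
        if i in ranked[:k]:
            hits += 1
    return hits / len(image_embs)


def best_candidate(candidates: Dict[str, List[Vector]], text_embs: List[Vector], k: int) -> str:
    """Name of the candidate embedding source with the highest Hit@K."""
    return max(candidates, key=lambda name: hit_at_k(candidates[name], text_embs, k))


# Toy data: three image-text pairs and two hypothetical pooling sources.
texts = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
candidates = {
    "final_layer": [[1.0, 0.1], [1.0, 0.2], [1.0, 0.9]],
    "layer12_head3": [[1.0, 0.1], [0.1, 1.0], [1.0, 1.0]],
}

for name, image_embs in candidates.items():
    print(name, hit_at_k(image_embs, texts, k=1))
print(best_candidate(candidates, texts, k=1))
```

In the thesis setting the same comparison would run over every (layer, head) pair of the pretrained model, with embeddings extracted once and scored without any additional training.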

🟩 Can lead to research publications

🕐 Only one position remaining

Posted: 04/05/2026

Hierarchical Scalable Vector Graphics Generation

SVG is the format of choice for resolution-independent visual content — icons, logos, diagrams, illustrations — yet generating high-quality SVGs with AI remains an unsolved problem. Unlike raster images, SVGs are structured symbolic sequences, and naively applying autoregressive or diffusion models to raw SVG markup fails at scale. This thesis takes a different approach: it operates in a compact learned latent space where each SVG path is encoded as a dense vector, making it possible to apply modern generative architectures at the level of visual primitives rather than raw tokens.

The student will design and evaluate generative models — exploring both diffusion-based and autoregressive formulations in latent space — capable of synthesising diverse, high-quality SVGs from scratch or from text prompts. Applications span design automation, data visualisation, and creative AI. Strong results are expected to lead to scientific publications at top computer vision venues.

🟩 Can lead to research publications

🕐 Only one position remaining

Posted: 04/05/2026

AI-Assisted CAD Design: Retrieval and Generative Modeling of Floor Plans for Accelerated Design

Starting a furniture layout or interior design from a blank canvas is slow and demanding. This thesis, developed in direct collaboration with Maticad, investigates how AI can accelerate the early stages of CAD design by proposing intelligent starting points: given a partial floor plan or a set of constraints, the system retrieves similar past designs from a database and generates alternative layouts that respect spatial and structural requirements.

The student will develop methods for embedding and comparing CAD layouts, build a retrieval-augmented design pipeline, and explore generative models — including graph-based approaches and diffusion architectures — for constraint-aware layout synthesis. The project leverages a real-world dataset of interior designs provided by Maticad, offering a rare opportunity to work on AI problems with direct industrial impact. Natural language interaction as a front-end interface for the design assistant is also on the table as a longer-term direction.

🟩 Can lead to research publications

🕐 Only one position remaining

Posted: 04/05/2026

World Model-Guided Safety Filtering for Vision-Language-Action Models in Robotic Manipulation

Robots controlled by Vision-Language-Action (VLA) models can follow complex instructions — but what happens when an action risks a collision, damages an object, or puts someone nearby in danger? This thesis gives the robot the ability to imagine the consequences of its actions before executing them. A learned world model is placed between the VLA policy and the physical robot: before each action is sent to the hardware, the world model simulates the outcome over a short time horizon and intervenes if the predicted result is unsafe.

The student will design and evaluate this World Model-Guided Safety Filter in simulation (AI2-THOR, Isaac Lab) and on real robots — a Franka Research 3 manipulator and a Unitree G1 humanoid. The thesis is conducted as part of our collaboration with AMD Silo AI on open-source VLA and world model development for robotics, with access to AMD Instinct GPUs and state-of-the-art robotic infrastructure. This is a unique opportunity to work at the frontier of embodied AI with real hardware, with strong expected publication impact.
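The control flow of such a filter can be sketched in a few lines. The example below is a deliberately simplified one-dimensional toy: the world model, the safety predicate, the zero-motion fallback, and the assumption that the proposed action is repeated over the whole horizon are all placeholders chosen for illustration, not the design that the thesis will produce.

```python
from typing import Callable, List

State = float   # toy 1-D gripper position
Action = float  # toy 1-D displacement per step


def rollout(
    world_model: Callable[[State, Action], State],
    state: State,
    action: Action,
    horizon: int,
) -> List[State]:
    """Predict the next `horizon` states, repeating the same action (assumption)."""
    predicted = []
    for _ in range(horizon):
        state = world_model(state, action)
        predicted.append(state)
    return predicted


def safety_filter(
    state: State,
    proposed: Action,
    world_model: Callable[[State, Action], State],
    is_safe: Callable[[State], bool],
    horizon: int,
    fallback: Action = 0.0,
) -> Action:
    """Pass the policy's action through only if every predicted state is safe."""
    predicted = rollout(world_model, state, proposed, horizon)
    if all(is_safe(s) for s in predicted):
        return proposed
    return fallback


def toy_world_model(state: State, action: Action) -> State:
    """Placeholder dynamics: position simply moves by the action."""
    return state + action


def toy_is_safe(state: State) -> bool:
    """Placeholder safety check: an obstacle sits at position 1.0."""
    return state < 1.0


far_from_obstacle = safety_filter(0.0, 0.2, toy_world_model, toy_is_safe, horizon=3)
near_obstacle = safety_filter(0.5, 0.2, toy_world_model, toy_is_safe, horizon=3)
print(far_from_obstacle, near_obstacle)
```

With a learned world model the rollout would operate on images or latent states, and the intervention could be richer than a fixed fallback, for example asking the policy for an alternative action.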

🟩 Can lead to research publications

🕐 Only one position remaining

Posted: 04/05/2026

SpatialVLA: Teaching Robots to Understand Spatial Language

“Pick up the red cup that is behind the bottle and place it to the left of the plate.” Instruction-following robots can recognize objects and generate grasps — but tasks requiring precise spatial reasoning like this one remain a genuine challenge. Current VLA architectures process spatial and relational language implicitly, leading to brittle performance precisely where precision matters most. This thesis investigates how to equip VLA models with an explicit spatial grounding module that computes geometric relationships, resolves spatial references, and incorporates them directly into action planning.

The student will design and evaluate SpatialVLA — an architectural extension to existing VLA models — on spatially demanding manipulation benchmarks, working with real robot platforms as part of our collaboration with AMD Silo AI on open-source robotic AI development. The work connects language understanding, geometric reasoning, and robot control, and is expected to lead to scientific publications at top robotics and AI venues.

🟩 Can lead to research publications

🕐 Only one position remaining

Posted: 04/05/2026

Teaching Robots to Handle Soft Objects: Fine-Tuning VLA Models for Deformable Manipulation

Robots are increasingly capable of picking up rigid objects — but fabrics, cables, sponges, and bags remain a persistent challenge. Deformable objects change shape unpredictably, are difficult to observe fully, and barely appear in the pre-training data of current VLA models. This thesis investigates how to efficiently adapt Pi0 — a state-of-the-art diffusion-based VLA that generates continuous action trajectories from vision and language — to deformable manipulation tasks on a real Franka Research 3 robot arm.

The student will study which parameter-efficient fine-tuning strategies (e.g., LoRA, adapter layers) best transfer Pi0's capabilities to deformable objects, characterise how many demonstrations are needed for reliable performance, and define a reproducible benchmarking protocol for this class of tasks. The work is conducted as part of our collaboration with AMD Silo AI, providing access to real robotic hardware and HPC infrastructure. Results are expected to contribute directly to a scientific publication.
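For readers unfamiliar with LoRA, the sketch below illustrates the standard low-rank update, in which a frozen weight matrix W is adapted as W + (alpha / r) · B A and only the small matrices A and B are trained. The layer sizes and matrix values are arbitrary toy numbers and say nothing about Pi0's actual architecture.

```python
from typing import List

Matrix = List[List[float]]


def full_param_count(d_out: int, d_in: int) -> int:
    """Trainable parameters when fine-tuning the whole weight matrix."""
    return d_out * d_in


def lora_param_count(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters for LoRA: B is d_out x rank, A is rank x d_in."""
    return rank * (d_out + d_in)


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product of two nested-list matrices."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_merged_weight(w: Matrix, b: Matrix, a: Matrix, alpha: float, rank: int) -> Matrix:
    """Merge the low-rank update into the frozen weight: W + (alpha / rank) * B A."""
    scale = alpha / rank
    update = matmul(b, a)
    return [
        [w_ij + scale * u_ij for w_ij, u_ij in zip(w_row, u_row)]
        for w_row, u_row in zip(w, update)
    ]


# Toy 2x2 layer with a rank-1 adapter.
w = [[1.0, 0.0], [0.0, 1.0]]
b = [[1.0], [0.0]]
a = [[0.0, 2.0]]
merged = lora_merged_weight(w, b, a, alpha=1.0, rank=1)
print(merged)

# Parameter budget for a hypothetical 4096 x 4096 projection at rank 8.
print(lora_param_count(4096, 4096, 8), full_param_count(4096, 4096))
```

The gap between the two counts is what makes such strategies attractive when only a limited number of robot demonstrations is available.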

🟩 Can lead to research publications

🕐 Only one position remaining

Posted: 04/05/2026

Beyond Autoregression: Exploration, Optimization, and Scaling of Diffusion Models for Text Generation

Text diffusion models represent a radical rethinking of how language is generated. Rather than producing tokens sequentially left-to-right — as all current LLMs do — diffusion models iteratively refine an entire sequence from noise, enabling bidirectional reasoning, parallel generation, and richer controllability. This paradigm has already shown promising results in addressing known autoregressive failure modes such as the reversal curse, where models fail to infer relationships in directions not seen during training.

This thesis will systematically evaluate text diffusion models against autoregressive baselines and explore directions — from improved sampling strategies to architecture choices and training objectives — to make diffusion a practical and competitive alternative for high-quality text generation. The work connects to our broader research on large-scale generative models and is expected to lead to scientific publications.

🟩 Can lead to research publications

🕐 Only one position remaining

Posted: 02/08/2026

Learning to Schedule: AI-Driven Optimization of SLURM Queues in HPC Systems

Efficient job scheduling is critical to maximizing the utilization and throughput of HPC systems. Traditional queueing policies in SLURM are often static and suboptimal under varying workloads. This thesis explores the development of learnable algorithms, such as reinforcement learning or graph-based predictors, for dynamically optimizing job scheduling within SLURM environments. The work involves collecting real-world queue traces, modeling job behaviors, and designing intelligent schedulers capable of adapting to system load, job priority, and resource availability.

🟩 Can lead to research publications

Posted: 04/21/2025

Multimodal LLMs for Structured Document Translation

This thesis explores the development of Multimodal Large Language Models capable of translating between rich-format PDF documents and ITX, a structured template format for generating PDF files. The activity involves training and evaluating vision-language models that can parse document layouts, understand visual and textual content jointly, and generate editable ITX representations. Applications span document automation, archiving, and accessibility. The work will focus on model design, fine-tuning strategies, and alignment between visual structure and template semantics.

🟩 Can lead to research publications

Posted: 04/20/2025

Deepfake Detection Algorithms

The proliferation of AI-generated Deepfakes poses serious threats to trust and security. This thesis will focus on the design and development of robust Deepfake detection algorithms, exploring both supervised and self-supervised approaches. Techniques can leverage multi-modal cues, visual artifacts, or anomalies in embedding spaces. The thesis can include benchmarking against existing datasets and participation in international challenges. This research is aligned with ongoing activities within the ELSA EU project.

🟩 Can lead to research publications

🕐 Only one position remaining

Posted: 04/20/2025

Agentic AI

Agentic AI refers to generative systems capable of autonomous goal-setting and reasoning, interacting with external tools and the environment. We aim to explore innovative architectures and training strategies for building novel agentic systems that can perform complex tasks. This includes the design of modular memory systems, multi-step reasoning chains, and self-reflective planning mechanisms. Applications span virtual assistants, robotic control, and automated research agents.

🟩 Can lead to research publications

Posted: 04/20/2025

Novel Retrieval-Augmented Generation Techniques

Retrieval-Augmented Generation (RAG) enhances the reasoning and factual consistency of generative models by grounding their responses in external knowledge retrieved at inference time. In this thesis, we aim to explore novel RAG techniques that improve end-to-end performance, coherence, and scalability. The thesis may involve designing new architectures, proposing improved training strategies, or benchmarking on open-domain QA, code generation, or document summarization. The activity is aligned with ongoing research in the context of foundation models and may contribute to publications and open-source tools.

🟩 Can lead to research publications

Posted: 04/20/2025

Trustworthy and Safe AI

Large-scale architectures are trained on billions of uncleaned, web-scraped items, which can lead to unsafe or toxic behaviours. We aim to design approaches for making large-scale models safe and trustworthy. These approaches can be applied to both embedding models (e.g. CLIP) and LLMs. The activity can also include the design of novel Deepfake detection algorithms, and is carried out as part of the ELSA and ELIAS EU projects.

🟩 Can lead to research publications

Posted: 04/06/2025

Connecting AI and HPC

As part of the MINERVA EU project, we aim to build approaches that ensure European AI communities can harness the power of the EuroHPC systems. The thesis will investigate large-scale architectures and community needs across Europe, and will develop training courses and best practices for leveraging HPC systems.

🟩 Can lead to research publications

Posted: 04/05/2025

Design of SVG Generation Algorithms

This thesis focuses on Transformer-based architectures that generate images in SVG format, and are therefore resolution-independent, starting from textual prompts. Models can build on LLM architectures and may employ diffusion-based strategies.

🟩 Can lead to research publications

Posted: 04/05/2025

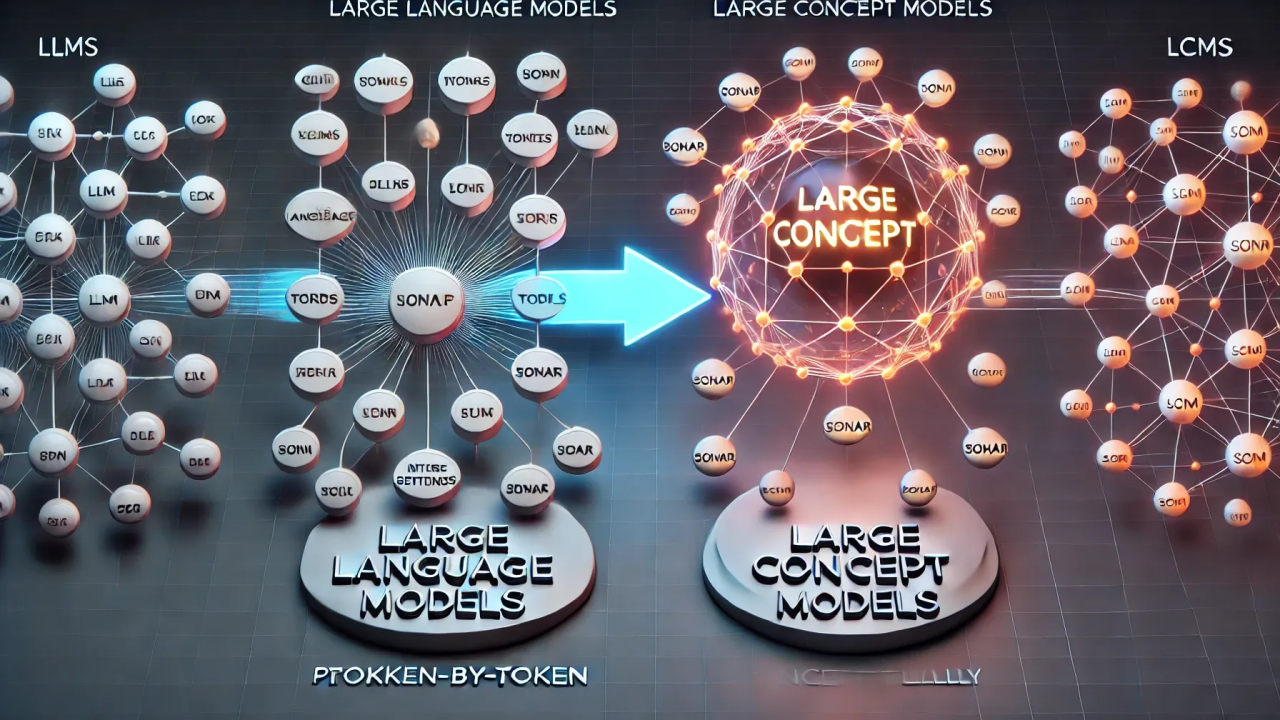

Multimodal Large Concept Models

Large Concept Models (LCMs) are a compelling alternative to traditional token-based LLMs, where tokens are replaced by dense vectors (representing higher-level "concepts") provided by a textual embedding space. This paradigm shift has, so far, been investigated only for text. In this thesis we aim to explore the frontier of building a multimodal version of an LCM, extending the idea of conceptualization to both text and images.

A significant impact is expected in terms of scientific publications.

🟩 Can lead to research publications

Posted: 02/24/2025

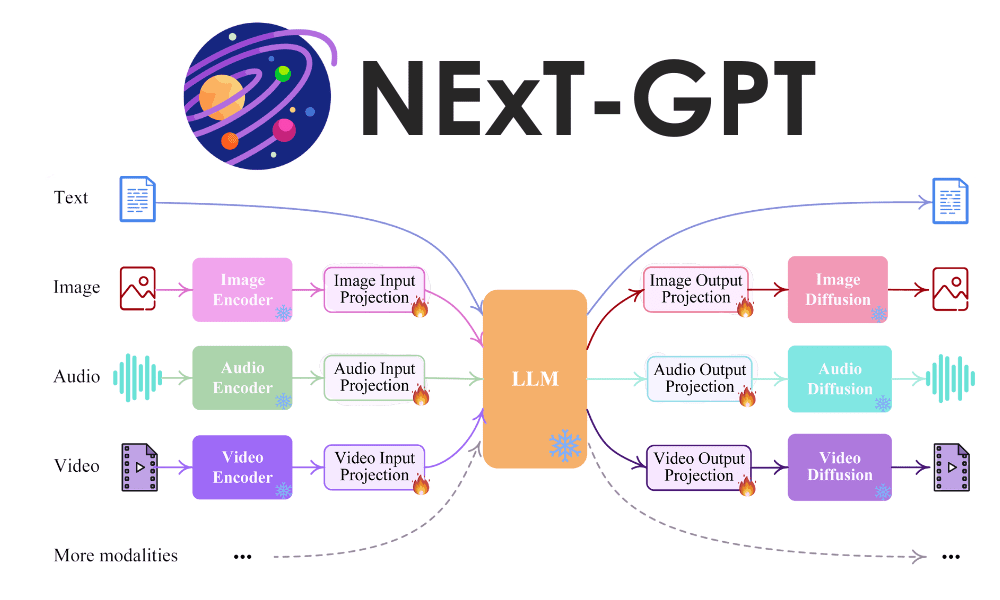

Large-Scale Multimodal Models

Large Multimodal Models integrate Vision and Language towards the achievement of human-like conversation abilities. The theses in this context explore novel architectures, multimodal connectors, novel training strategies and evaluation scenarios.

🟩 Can lead to research publications

Posted: 02/24/2025