Salimeh Yasaei Sekeh

Paper download is intended for registered attendees only, and is

subjected to the IEEE Copyright Policy. Any other use is strongly forbidden.

Papers from this author

MINT: Deep Network Compression Via Mutual Information-Based Neuron Trimming

Madan Ravi Ganesh, Jason Corso, Salimeh Yasaei Sekeh

Auto-TLDR; Mutual Information-based Neuron Trimming for Deep Compression via Pruning

Abstract Slides Poster Similar

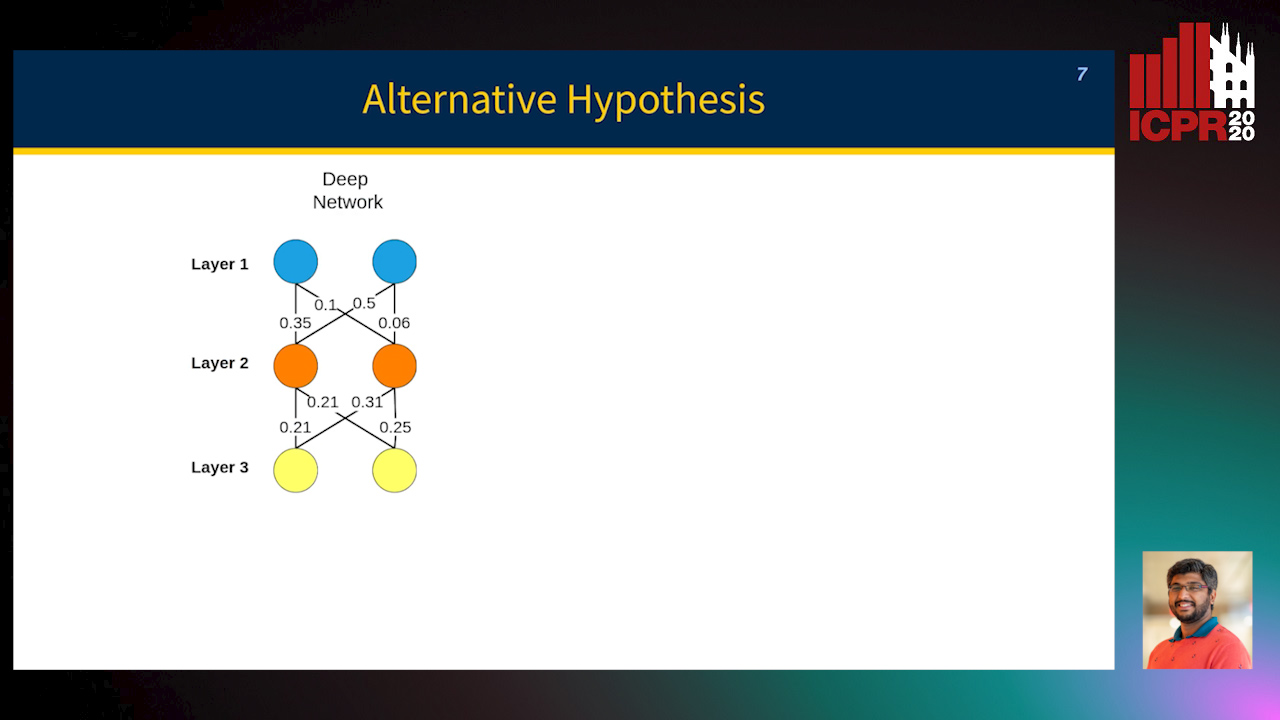

Most approaches to deep neural network compression via pruning either evaluate a filter’s importance using its weights or optimize an alternative objective function with sparsity constraints. While these methods offer a useful way to approximate contributions from similar filters, they often either ignore the dependency between layers or solve a more difficult optimization objective than standard cross-entropy. Our method, Mutual Information-based Neuron Trimming (MINT), approaches deep compression via pruning by enforcing sparsity based on the strength of the relationship between filters of adjacent layers, across every pair of layers. The relationship is calculated using conditional geometric mutual information which evaluates the amount of similar information exchanged between the filters using a graph-based criterion. When pruning a network, we ensure that retained filters contribute the majority of the information towards succeeding layers which ensures high performance. Our novel approach outperforms existing state-of-the-art compression-via-pruning methods on the standard benchmarks for this task: MNIST, CIFAR-10, and ILSVRC2012, across a variety of network architectures. In addition, we discuss our observations of a common denominator between our pruning methodology’s response to adversarial attacks and calibration statistics when compared to the original network.