Raghavendra Ramachandra

Papers from this author

Handwritten Signature and Text Based User Verification Using Smartwatch

Raghavendra Ramachandra, Sushma Venkatesh, Raja Kiran, Christoph Busch

Auto-TLDR; A novel technique for user verification using a smartwatch, based on the user's writing or signing pattern

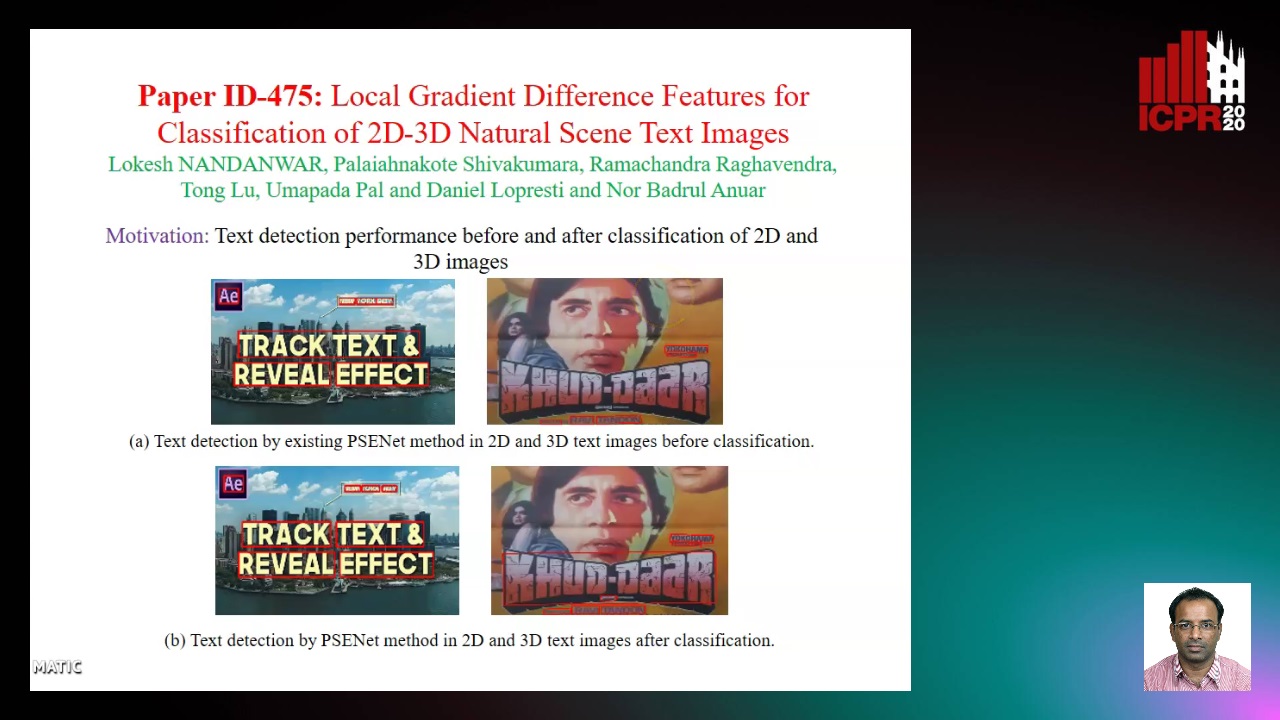

Local Gradient Difference Based Mass Features for Classification of 2D-3D Natural Scene Text Images

Lokesh Nandanwar, Shivakumara Palaiahnakote, Raghavendra Ramachandra, Tong Lu, Umapada Pal, Daniel Lopresti, Nor Badrul Anuar

Auto-TLDR; Classification of 2D and 3D Natural Scene Images Using COLD
