Filter Pruning Using Hierarchical Group Sparse Regularization for Deep Convolutional Neural Networks

Auto-TLDR; Hierarchical Group Sparse Regularization for Sparse Convolutional Neural Networks

Similar papers

Channel Planting for Deep Neural Networks Using Knowledge Distillation

Kakeru Mitsuno, Yuichiro Nomura, Takio Kurita

Auto-TLDR; Incremental Training for Deep Neural Networks with Knowledge Distillation

HFP: Hardware-Aware Filter Pruning for Deep Convolutional Neural Networks Acceleration

Fang Yu, Chuanqi Han, Pengcheng Wang, Ruoran Huang, Xi Huang, Li Cui

Auto-TLDR; Hardware-Aware Filter Pruning for Convolutional Neural Networks

Slimming ResNet by Slimming Shortcut

Donggyu Joo, Doyeon Kim, Junmo Kim

Auto-TLDR; SSPruning: Slimming Shortcut Pruning on ResNet Based Networks

Learning to Prune in Training via Dynamic Channel Propagation

Shibo Shen, Rongpeng Li, Zhifeng Zhao, Honggang Zhang, Yugeng Zhou

Auto-TLDR; Dynamic Channel Propagation for Neural Network Pruning

A Discriminant Information Approach to Deep Neural Network Pruning

Auto-TLDR; Channel Pruning Using Discriminant Information and Reinforcement Learning

Softer Pruning, Incremental Regularization

Linhang Cai, Zhulin An, Yongjun Xu

Auto-TLDR; Asymptotic SofteR Filter Pruning for Deep Neural Network Pruning

Abstract Slides Poster Similar

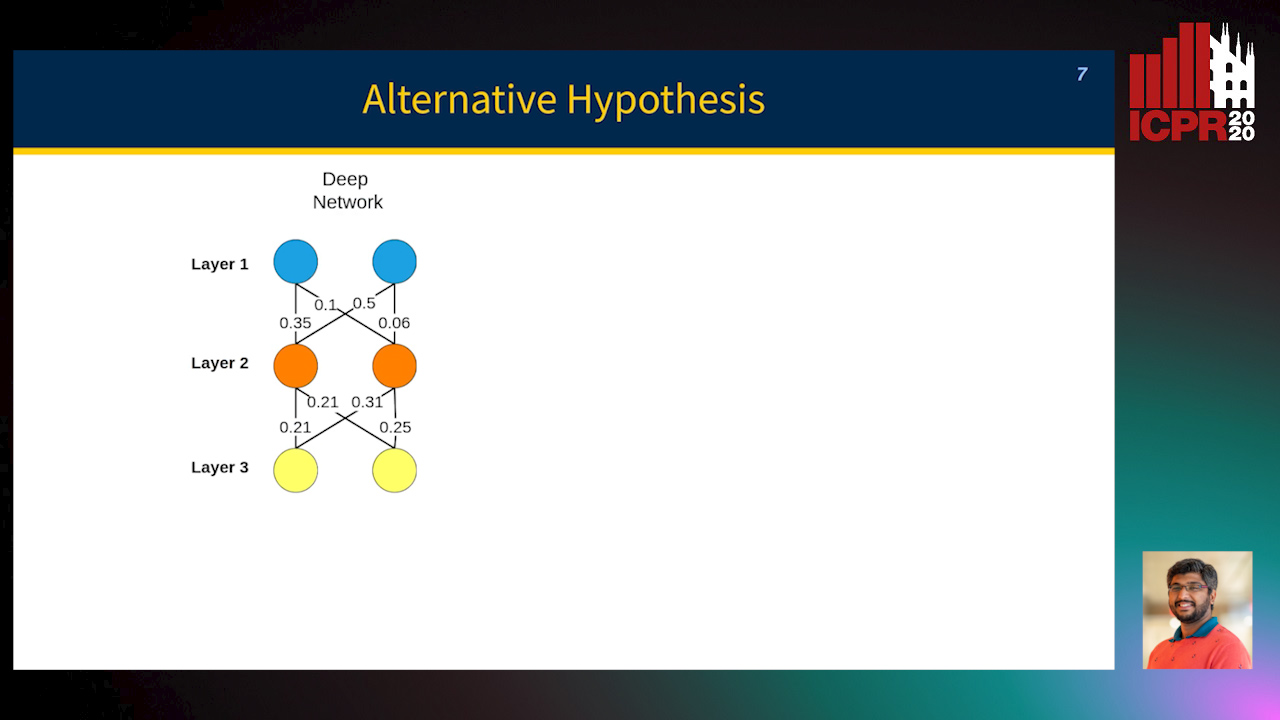

MINT: Deep Network Compression Via Mutual Information-Based Neuron Trimming

Madan Ravi Ganesh, Jason Corso, Salimeh Yasaei Sekeh

Auto-TLDR; Mutual Information-based Neuron Trimming for Deep Compression via Pruning

Activation Density Driven Efficient Pruning in Training

Timothy Foldy-Porto, Yeshwanth Venkatesha, Priyadarshini Panda

Auto-TLDR; Real-Time Neural Network Pruning with Compressed Networks

On the Information of Feature Maps and Pruning of Deep Neural Networks

Mohammadreza Soltani, Suya Wu, Jie Ding, Robert Ravier, Vahid Tarokh

Auto-TLDR; Compressing Deep Neural Models Using Mutual Information

Progressive Gradient Pruning for Classification, Detection and Domain Adaptation

Le Thanh Nguyen-Meidine, Eric Granger, Marco Pedersoli, Madhu Kiran, Louis-Antoine Blais-Morin

Auto-TLDR; Progressive Gradient Pruning for Iterative Filter Pruning of Convolutional Neural Networks

Learning Sparse Deep Neural Networks Using Efficient Structured Projections on Convex Constraints for Green AI

Michel Barlaud, Frederic Guyard

Auto-TLDR; Constrained Deep Neural Network with Constrained Splitting Projection

Neuron-Based Network Pruning Based on Majority Voting

Ali Alqahtani, Xianghua Xie, Ehab Essa, Mark W. Jones

Auto-TLDR; Large-Scale Neural Network Pruning using Majority Voting

Compression of YOLOv3 Via Block-Wise and Channel-Wise Pruning for Real-Time and Complicated Autonomous Driving Environment Sensing Applications

Jiaqi Li, Yanan Zhao, Li Gao, Feng Cui

Auto-TLDR; Pruning YOLOv3 with Batch Normalization for Autonomous Driving

Speeding-Up Pruning for Artificial Neural Networks: Introducing Accelerated Iterative Magnitude Pruning

Marco Zullich, Eric Medvet, Felice Andrea Pellegrino, Alessio Ansuini

Auto-TLDR; Iterative Pruning of Artificial Neural Networks with Overparametrization

Exploiting Non-Linear Redundancy for Neural Model Compression

Muhammad Ahmed Shah, Raphael Olivier, Bhiksha Raj

Auto-TLDR; Compressing Deep Neural Networks with Linear Dependency

Exploiting Elasticity in Tensor Ranks for Compressing Neural Networks

Jie Ran, Rui Lin, Hayden Kwok-Hay So, Graziano Chesi, Ngai Wong

Auto-TLDR; Nuclear-Norm Rank Minimization Factorization for Deep Neural Networks

Attention Based Pruning for Shift Networks

Ghouthi Hacene, Carlos Lassance, Vincent Gripon, Matthieu Courbariaux, Yoshua Bengio

Auto-TLDR; Shift Attention Layers for Efficient Convolutional Layers

Norm Loss: An Efficient yet Effective Regularization Method for Deep Neural Networks

Theodoros Georgiou, Sebastian Schmitt, Thomas Baeck, Wei Chen, Michael Lew

Auto-TLDR; Weight Soft-Regularization with Oblique Manifold for Convolutional Neural Network Training

WeightAlign: Normalizing Activations by Weight Alignment

Xiangwei Shi, Yunqiang Li, Xin Liu, Jan Van Gemert

Auto-TLDR; WeightAlign: Normalization of Activations without Sample Statistics

CQNN: Convolutional Quadratic Neural Networks

Auto-TLDR; Quadratic Neural Network for Image Classification

Towards Low-Bit Quantization of Deep Neural Networks with Limited Data

Yong Yuan, Chen Chen, Xiyuan Hu, Silong Peng

Auto-TLDR; Low-Precision Quantization of Deep Neural Networks with Limited Data

Dynamic Multi-Path Neural Network

Yingcheng Su, Yichao Wu, Ken Chen, Ding Liang, Xiaolin Hu

Auto-TLDR; Dynamic Multi-path Neural Network

How Does DCNN Make Decisions?

Yi Lin, Namin Wang, Xiaoqing Ma, Ziwei Li, Gang Bai

Auto-TLDR; Exploring Deep Convolutional Neural Network's Decision-Making Interpretability

Compact CNN Structure Learning by Knowledge Distillation

Waqar Ahmed, Andrea Zunino, Pietro Morerio, Vittorio Murino

Auto-TLDR; Knowledge Distillation for Compressing Deep Convolutional Neural Networks

Is the Meta-Learning Idea Able to Improve the Generalization of Deep Neural Networks on the Standard Supervised Learning?

Auto-TLDR; Meta-learning Based Training of Deep Neural Networks for Few-Shot Learning

Generalization Comparison of Deep Neural Networks Via Output Sensitivity

Mahsa Forouzesh, Farnood Salehi, Patrick Thiran

Auto-TLDR; Generalization of Deep Neural Networks using Sensitivity

VPU Specific CNNs through Neural Architecture Search

Ciarán Donegan, Hamza Yous, Saksham Sinha, Jonathan Byrne

Auto-TLDR; Efficient Convolutional Neural Networks for Edge Devices using Neural Architecture Search

Selecting Useful Knowledge from Previous Tasks for Future Learning in a Single Network

Feifei Shi, Peng Wang, Zhongchao Shi, Yong Rui

Auto-TLDR; Continual Learning with Gradient-based Threshold

Beyond Cross-Entropy: Learning Highly Separable Feature Distributions for Robust and Accurate Classification

Arslan Ali, Andrea Migliorati, Tiziano Bianchi, Enrico Magli

Auto-TLDR; Gaussian class-conditional simplex loss for adversarially robust multiclass classifiers

Efficient Online Subclass Knowledge Distillation for Image Classification

Maria Tzelepi, Nikolaos Passalis, Anastasios Tefas

Auto-TLDR; OSKD: Online Subclass Knowledge Distillation

Fine-Tuning DARTS for Image Classification

Muhammad Suhaib Tanveer, Umar Karim Khan, Chong Min Kyung

Auto-TLDR; Fine-Tune Neural Architecture Search using Fixed Operations

Contextual Classification Using Self-Supervised Auxiliary Models for Deep Neural Networks

Sebastian Palacio, Philipp Engler, Jörn Hees, Andreas Dengel

Auto-TLDR; Self-Supervised Autogenous Learning for Deep Neural Networks

MaxDropout: Deep Neural Network Regularization Based on Maximum Output Values

Claudio Filipi Gonçalves Santos, Danilo Colombo, Mateus Roder, Joao Paulo Papa

Auto-TLDR; MaxDropout: A Regularizer for Deep Neural Networks

Meta Soft Label Generation for Noisy Labels

Auto-TLDR; MSLG: Meta-Learning for Noisy Label Generation

ResNet-Like Architecture with Low Hardware Requirements

Elena Limonova, Daniil Alfonso, Dmitry Nikolaev, Vladimir V. Arlazarov

Auto-TLDR; BM-ResNet: Bipolar Morphological ResNet for Image Classification

Operation and Topology Aware Fast Differentiable Architecture Search

Shahid Siddiqui, Christos Kyrkou, Theocharis Theocharides

Auto-TLDR; EDARTS: Efficient Differentiable Architecture Search with Efficient Optimization

Improving Batch Normalization with Skewness Reduction for Deep Neural Networks

Pak Lun Kevin Ding, Martin Sarah, Baoxin Li

Auto-TLDR; Batch Normalization with Skewness Reduction

Feature-Dependent Cross-Connections in Multi-Path Neural Networks

Dumindu Tissera, Kasun Vithanage, Rukshan Wijesinghe, Kumara Kahatapitiya, Subha Fernando, Ranga Rodrigo

Auto-TLDR; Multi-path Networks for Adaptive Feature Extraction

E-DNAS: Differentiable Neural Architecture Search for Embedded Systems

Javier García López, Antonio Agudo, Francesc Moreno-Noguer

Auto-TLDR; E-DNAS: Differentiable Architecture Search for Light-Weight Networks for Image Classification

Improved Residual Networks for Image and Video Recognition

Ionut Cosmin Duta, Li Liu, Fan Zhu, Ling Shao

Auto-TLDR; Residual Networks for Deep Learning

A Close Look at Deep Learning with Small Data

Auto-TLDR; Low-Complexity Neural Networks for Small Data Conditions

Can Data Placement Be Effective for Neural Networks Classification Tasks? Introducing the Orthogonal Loss

Brais Cancela, Veronica Bolon-Canedo, Amparo Alonso-Betanzos

Auto-TLDR; Spatial Placement for Neural Network Training Loss Functions

Resource-efficient DNNs for Keyword Spotting using Neural Architecture Search and Quantization

David Peter, Wolfgang Roth, Franz Pernkopf

Auto-TLDR; Neural Architecture Search for Keyword Spotting in Limited Resource Environments

Fast and Efficient Neural Network for Light Field Disparity Estimation

Auto-TLDR; Improving Efficient Light Field Disparity Estimation Using Deep Neural Networks

Filtered Batch Normalization

András Horváth, Jalal Al-Afandi

Auto-TLDR; Batch Normalization with Out-of-Distribution Activations in Deep Neural Networks

Not All Domains Are Equally Complex: Adaptive Multi-Domain Learning

Ali Senhaji, Jenni Karoliina Raitoharju, Moncef Gabbouj, Alexandros Iosifidis

Auto-TLDR; Adaptive Parameterization for Multi-Domain Learning

Hcore-Init: Neural Network Initialization Based on Graph Degeneracy

Stratis Limnios, George Dasoulas, Dimitrios Thilikos, Michalis Vazirgiannis

Auto-TLDR; K-hypercore: Graph Mining for Deep Neural Networks

Transitional Asymmetric Non-Local Neural Networks for Real-World Dirt Road Segmentation

Auto-TLDR; Transitional Asymmetric Non-Local Neural Networks for Semantic Segmentation on Dirt Roads